Managing Your Digital Workforce: Control, Governance, and Guardrails for the Age of Agents

This article is not for teams evaluating agentic AI. It is for teams already running it in production: organisations with somewhere between forty and four hundred autonomous agents, most approved through informal channels, many interacting with systems that were never formally reviewed, and none of which appear in the organisation's Disaster Recovery plan.

What follows is an account of what breaks, why it breaks in ways that existing Site Reliability Engineering (SRE) playbooks don't cover, and how to build an architecture that gives your teams statistical control over a digital workforce that regulators, risk officers, and your own conscience can actually tolerate.

The hiring problem you never expected

When you deploy 100 AI agents into production, you are not deploying 100 features. You are running a Human Resources (HR) department. Agents should have managers. They should have scopes of authority. And when their decisions are wrong, somebody has to own that.

Your existing software governance model does not handle this. Traditional engineering failures are deterministic: a function received unexpected input, a race condition emerged under load. AI agents introduce semantic failure: the system did exactly what it was told, within all defined parameters, and it was still wrong. The Know Your Customer (KYC) agent approved a customer by every reasonable standard, yet the customer turned out to be a shell entity for a sanctioned party that only appeared on an Office of Foreign Assets Control (OFAC) list updated six hours after the agent last synced. No bug ticket covers that.

The first decision you need to make is your taxonomy of agency. We recommend a three-layer model, defined not by AI sophistication but by who is operating it and whether a human remains in the decision loop.

Layer 1: Individual cognitive augmentation

These are single-user agents: a skill in a developer's toolchain, an analyst's private research assistant, a document summariser a compliance officer runs interactively. Think of a developer-facing coding assistant that parses Securities and Exchange Commission (SEC) filings, or an agent that pre-structures a credit memo from raw data before a human reviews it. The human is present, engaged, and the final decision gate. The blast radius of a failure is bounded by the person using it.

Governance requirements are relatively light: data access scoping, audit logging, and a usage policy the user has acknowledged. The primary danger is not catastrophic failure. It is invisible drift: the analyst who has been trusting the agent's summaries for six months without validating them. That is a culture and training problem, and governance policy has to address it alongside the technical controls.

Layer 2: Human-in-the-middle process automation

These agents serve multiple users, operate across organisational boundaries, and execute multi-step workflows, with structured human checkpoints at consequential decision points. A KYC pipeline that gathers and scores customer data before routing to a compliance analyst for final approval. A loan origination chain that pre-populates a credit committee pack, with a human signing off before any commitment issues.

The critical design principle: human oversight is an architectural feature, not a User Interface (UI) afterthought. If the human checkpoint is a rubber stamp, meaning reviewers are approving outputs they cannot effectively evaluate in the time available, you do not have Layer 2. You have Layer 3 with better optics.

Layer 3: Fully autonomous execution

These agents act without a human in the decision loop between trigger and consequence. They are appropriate for a narrow class of tasks where the operational window for human review genuinely does not exist: real-time fraud intervention, automated regulatory reporting, and treasury rebalancing triggered by predefined market conditions.

Layer 3 is not a reward for mature AI programmes. It is a risk class that demands the most rigorous guardrail architecture and should be the smallest part of your agent footprint by deliberate design. Reserve it for decisions where human latency makes oversight structurally impossible (not merely inconvenient) and build deterministic circuit breakers that constrain the autonomous action space tightly. Layer 3 requires the architecture itself to carry accountability, because no named human does.

Reliability is a lie (and the math will prove it to your CFO)

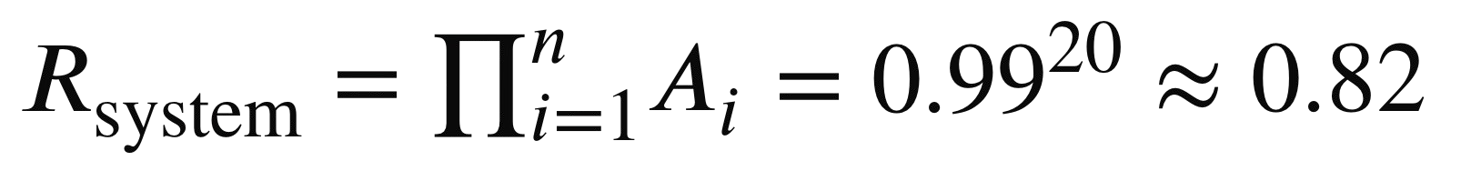

Individual agents look reliable. On well-bounded tasks, accuracy sits at 98%, 99%, sometimes higher. The problem surfaces when you chain them together. If step failures are independent, per-step accuracy multiplies, so a sequential workflow where every step runs at 99% accuracy still fails roughly 1 in 5 times once you reach twenty steps: 0.99^20 ≈ 0.82, an end-to-end failure rate of about 18%.
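The compounding is easy to check directly. The sketch below assumes step failures are independent, which is a simplification, and the function name `chain_success_rate` is purely illustrative.

```python
def chain_success_rate(step_accuracy: float, steps: int) -> float:
    """Probability that every step in a sequential chain succeeds,
    assuming each step fails independently of the others."""
    return step_accuracy ** steps


# Print how end-to-end reliability decays as the chain gets longer.
for steps in (1, 5, 10, 20, 50):
    success = chain_success_rate(0.99, steps)
    print(f"{steps:>2} steps: {success:.1%} success, {1 - success:.1%} failure")
```

Correlated failures can make the real number better or worse than this estimate, but the direction holds: every additional step multiplies in another chance to be wrong.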

A twenty-step chain is not exotic. A KYC orchestration workflow that gathers identity data, cross-references sources, performs entity resolution, scores risk, checks watchlists, formats output, routes to the correct review queue, and generates an audit record is easily twenty steps.

In traditional software engineering, an 18% failure rate on critical workflows would halt a release. In agentic AI, teams routinely ship this configuration because the failures are not immediately visible. They manifest as reconciliation exceptions days later, as a Suspicious Activity Report (SAR) that was never filed, as contradictory onboarding communications from two agents that never knew about each other. The fix is architectural, not operational. You cannot tune your way to reliable multi-agent workflows.

The architecture: Separating reasoning from orchestration

The pattern that resolves this is the strict separation of two concerns that most agent frameworks conflate: the Conductor and the Performer.

The Conductor is the orchestration layer, sitting closer to the deterministic end of the spectrum. Built on workflow state management and routing logic, it enforces what should happen, in what order, under what conditions, and with what error handling. Business logic lives here: routing rules, retry policies, circuit breakers, hard compliance thresholds, and escalation conditions. These must be legible to a human engineer and auditable by a regulator. The more deterministic this layer, the more predictable and auditable the system — but also the more rigid. A Conductor that anticipates every possible state is expensive to build and brittle when reality surprises it.

The Performer sits closer to the probabilistic end. This is where LLM-based agents operate, handling the work that resists deterministic encoding: interpreting unstructured content, reasoning about ambiguous inputs, classifying edge cases. Performer outputs are returned to the Conductor as recommendations or structured data and are never executed directly. The agent recommends; the orchestration layer decides whether that recommendation is within policy, has the required confidence score, and can be actioned given current system state. However, a Performer confined to read-only and reasoning operations is a limited one. The agent-as-worker analogy starts to break down when agents cannot write, trigger, or cause side effects — they become expensive summarisers rather than autonomous actors. Extending the Performer to include write operations and side effects increases its utility significantly, but it also loosens the Conductor's grip and reintroduces the unpredictability this architecture was designed to contain.

In practice, the line between Conductor and Performer is not a wall but a dial. Every implementation sits somewhere on the spectrum between full determinism and full autonomy, and the right position depends on the specific workflow, the regulatory context, and the organisation's tolerance for unpredictable outcomes. The goal is not to eliminate LLM stochasticity entirely but to contain it to the decisions where semantic reasoning genuinely adds value, while keeping the consequences of that reasoning under deterministic control. Each project needs to find its own balance — and to make that balance an explicit design decision rather than an emergent property of how the system was assembled.
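One way to picture the split is a Performer that only ever returns a structured recommendation, and a Conductor that decides deterministically what happens next. This is a minimal sketch, not a reference implementation: the `Recommendation` type, the `ALLOWED_ACTIONS` set, and the `MIN_CONFIDENCE` threshold are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Recommendation:
    """What a Performer hands back: a proposal, never an executed action."""
    action: str        # e.g. "approve" or "reject"
    confidence: float  # Performer's self-reported score in [0, 1]
    rationale: str     # free-text reasoning, kept for the audit trail


ALLOWED_ACTIONS = {"approve", "reject", "escalate"}  # hypothetical policy
MIN_CONFIDENCE = 0.90                                # hypothetical threshold


def conduct(rec: Recommendation) -> str:
    """Deterministic gate: return the action the workflow will actually take."""
    if rec.action not in ALLOWED_ACTIONS:
        return "escalate"  # outside policy, so it is never executed
    if rec.confidence < MIN_CONFIDENCE:
        return "escalate"  # too uncertain, so it goes to a human
    return rec.action
```

Moving the dial toward autonomy means widening `ALLOWED_ACTIONS` or lowering the threshold, and both changes stay visible in code review rather than hidden in a prompt.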

The integration gap: When agents meet legacy systems

The most common production failure mode we have encountered is not an AI failure. It is an integration architecture failure that AI makes dramatically more frequent and expensive.

Agentic orchestration operates at a fundamentally different velocity and retry pattern than human-operated workflows. When an agent triggers an action against a legacy core system built for batch processing, the mismatch creates a failure class that existing monitoring often misses. The agent sends an instruction. The downstream system begins processing. The agent's timeout threshold, calibrated for modern microservices, fires. The agent retries. The downstream system receives the second instruction and processes it while also completing the first. The first signal that anything went wrong is a reconciliation exception hours later.

For core payment processing and settlement, robust infrastructure for this already exists. The exposure is in new categories of workflow: KYC pipelines calling internal scoring APIs not designed for automated callers, document processing chains triggering downstream notifications through systems never load-tested at agent velocity, and reporting workflows writing to audit systems in patterns their designers never anticipated.

The right response is not to retrofit payment-grade reliability engineering onto every internal API. It is to design the agentic integration layer with explicit awareness of which downstream systems are idempotent, which have defined retry contracts, and which will behave unpredictably under automated retry pressure. Where the answers are unclear, the Conductor layer should enforce conservative, human-verified retry behaviour rather than aggressive automation.
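A Conductor can encode that awareness as data rather than tribal knowledge. The sketch below assumes a hypothetical `DownstreamProfile` record per target system and defaults to human-verified retries whenever idempotency or the retry contract is unknown.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class RetryMode(Enum):
    AUTOMATIC = "automatic"            # safe to retry without review
    HUMAN_VERIFIED = "human_verified"  # a person confirms before any retry


@dataclass(frozen=True)
class DownstreamProfile:
    name: str
    idempotent: Optional[bool]   # None means nobody has established this
    max_retries: Optional[int]   # documented retry contract, if one exists


def retry_mode(profile: DownstreamProfile) -> RetryMode:
    """Allow automated retries only when both facts are known and favourable."""
    if profile.idempotent is True and profile.max_retries is not None:
        return RetryMode.AUTOMATIC
    return RetryMode.HUMAN_VERIFIED
```

The default matters more than the happy path: an unmapped legacy system falls into the conservative mode without anyone having to remember to configure it.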

The guardian paradox: Why "AI watching AI" is not a governance model

The instinct to address agentic risk by adding a supervisory AI layer is understandable. It is also wrong in its most common implementation.

When you deploy a Guardian Agent that monitors a Worker Agent, and both are grounded in the same base model and reasoning about the same domain, you have not added an independent control. You have added a correlated control. They will make the same semantic errors for the same reasons, and neither will flag the other when they do. Red-teaming exercises consistently show that same-family LLM guardrails fail to catch the most dangerous category of agent error: confident wrong reasoning. The agent produces a well-structured, internally consistent justification for an incorrect conclusion. The LLM guardian finds the reasoning persuasive, because it would have reached the same conclusion.

Dual-track validation

Track one is semantic, probabilistic, and AI-driven. An independent LLM, from a different vendor and different model family where feasible, reviews the primary agent's reasoning, intent, and output for coherence, plausibility, and alignment with stated policy.

Track two is deterministic, heuristic, and never LLM-based. A hard-coded validation suite of rule engines, compiled business logic, and query-based verification against the live system of record checks the math, validates permissions, and runs outputs against static compliance lists. An OFAC sanctions check should never be performed by a language model. A language model is probabilistic. The OFAC list is binary. You are either on it or you are not, and the system that checks should be incapable of finding nuance where none exists.

In practice: a KYC agent returns an "Approve" recommendation. Before it reaches a decision queue, the deterministic validator confirms the applicant is not on any watchlist, that the stated figures are arithmetically consistent with submitted documentation, and that the approving agent's credentials have active authorisation rights. Only if all deterministic checks pass does the semantic guardian review the reasoning quality. You do not trust the guardian because it is AI. You trust it because you can read exactly what it checked and why.
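The ordering described here can be sketched in a few lines. Everything named below is an assumption for illustration: the `WATCHLIST` set stands in for a real sanctions data source, the application fields are invented, and `semantic_review` is whatever independent LLM reviewer you plug in. Exact string matching is a simplification of real screening logic.

```python
from typing import Callable, List

WATCHLIST = {"ACME SHELL LTD"}  # hypothetical stand-in for a sanctions list


def deterministic_checks(application: dict) -> List[str]:
    """Track two: rule-based checks with no model in the loop."""
    failures = []
    if application["name"].strip().upper() in WATCHLIST:
        failures.append("watchlist_hit")
    if sum(application["income_items"]) != application["stated_income"]:
        failures.append("income_mismatch")
    if not application["agent_authorised"]:
        failures.append("agent_not_authorised")
    return failures


def validate(application: dict, semantic_review: Callable[[dict], bool]) -> str:
    """Run deterministic checks first; only then consult the semantic guardian."""
    failures = deterministic_checks(application)
    if failures:
        return "blocked: " + ", ".join(failures)
    return "queue_for_decision" if semantic_review(application) else "escalate"
```

Because the deterministic track returns named failures, the audit record shows exactly which rule stopped a recommendation, independent of anything the model said.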

The shadow AI problem is already inside your perimeter

51% of employees have connected AI tools to internal work systems without the approval or knowledge of their Information Technology (IT) organisation (BlackFog survey, CIO.com, January 2026). Not used a tool: connected it, with real credentials, in ways your security team has no visibility into. 33% of employees admit to sharing enterprise research or datasets with those tools, 27% to uploading employee data, and 23% to inputting company financial information. In financial services, those three categories are regulatory exposure.

It does not stop at the individual contributor level. 69% of C-suite executives and 66% of directors and senior VPs say they prioritise speed over security when it comes to AI adoption. Shadow AI is not a rogue behaviour contained to junior staff. It is an organisational posture endorsed at the top, which makes it significantly harder to govern through policy alone.

You do not have a shadow AI adoption problem. You have a Non-Human Identity (NHI) sprawl problem that shadow AI has dramatically accelerated. Every AI agent, sanctioned or not, that connects to your systems is an identity. It has access rights. It generates logs nobody is looking at because nobody assigned accountability for that identity to a named human.

The exposure begins at the individual level. Layer 1 agents are easy to acquire, easy to connect to internal systems, and easy to run without IT visibility. The behaviour is not confined to sophisticated actors deliberately bypassing controls. It is individuals at every level reaching for convenient tools and connecting them, with real credentials, to real systems. An analyst pasting a client memo into an external AI tool, a developer whose coding assistant exfiltrates repository context to a third-party Application Programming Interface (API), a compliance officer uploading a draft Suspicious Activity Report (SAR) to a consumer chatbot for a quick summary: none of these feel like security incidents in the moment. Each of them is. This is why governance must address not just what sanctioned agents are permitted to do, but which tools are permitted at all, what data classifications they may handle, and whether their underlying infrastructure keeps that data within your control.

The governance architecture we advocate treats agents as you should treat employees.

The Agent Registry is the digital equivalent of your HR system. Every production agent has a registered identity: a defined owner who carries personal accountability, a defined scope of operations, a fixed expiration date after which credentials are automatically revoked unless explicitly renewed, and a defined escalation path for situations outside its operational envelope.
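A registry entry needs very little to be useful. The sketch below is one possible shape, with hypothetical field names, showing how expiry and scope can be checked together before any operation is allowed.

```python
from dataclasses import dataclass
from datetime import date
from typing import FrozenSet


@dataclass(frozen=True)
class AgentRecord:
    agent_id: str
    owner: str                 # named human who carries accountability
    scope: FrozenSet[str]      # operations the agent may perform
    expires_on: date           # credentials lapse after this date
    escalation_contact: str    # where out-of-envelope cases are routed


def credentials_valid(record: AgentRecord, today: date) -> bool:
    """Credentials are valid up to and including the expiry date."""
    return today <= record.expires_on


def may_perform(record: AgentRecord, operation: str, today: date) -> bool:
    """An operation is allowed only if it is in scope and not expired."""
    return credentials_valid(record, today) and operation in record.scope
```

Passing `today` in explicitly, rather than reading the clock inside the function, keeps the check deterministic and easy to test.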

On-Behalf-Of (OBO) Authentication means agents do not hold persistent service account credentials. They operate with the scoped, session-bound permissions of the human user who initiated the workflow. This eliminates the privilege escalation attack surface: prompt injection cannot grant an agent access to systems the initiating human could not touch, and it creates a clean, auditable chain of delegation.

Governance-as-Code means every agent has a formal specification (its system prompt, tool access list, and permitted data sources) held in version control. Any change, including a three-word edit to a system prompt, triggers an automated red-teaming suite before the updated agent can be promoted to production. Significant behavioural regressions have emerged from what engineers describe as "minor clarifications." The regression catches itself in the pipeline, not in production at 2am.
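One simple way to make "any change" enforceable is to fingerprint the whole specification and compare it with the last approved fingerprint. This is a sketch under assumptions: the spec is taken to be a JSON-serialisable dictionary, and the function names are illustrative.

```python
import hashlib
import json


def spec_fingerprint(spec: dict) -> str:
    """Stable hash of an agent spec; key order does not affect the result."""
    canonical = json.dumps(spec, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def needs_red_team(candidate: dict, approved_fingerprint: str) -> bool:
    """Any difference from the approved spec, however small, forces a re-test."""
    return spec_fingerprint(candidate) != approved_fingerprint
```

A pipeline step that calls `needs_red_team` before promotion treats a three-word prompt edit exactly like a new tool grant, which is the point.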

The bill nobody budgeted for

Token costs are the closest thing to a hidden operational failure mode that agentic AI has produced. Here is what Unbounded Reasoning Debt looks like: an agent is given a complex task. It begins reasoning, produces an intermediate output, determines it is insufficient, and begins again. Seven iterations. Each costs money. The final output is marginally better than the first or, in the worst case, the agent enters a self-correction loop and never produces an output at all. Single runaway sessions can cost thousands of dollars. When this becomes a pattern, it is consistently classified as a monitoring gap. It is also an architectural deficiency.

The Cost-per-Outcome (CPO) model is the only financially responsible way to operate agentic AI at scale:

CPO = (Total Compute Cost + Infrastructure Overhead) / Successful Task Completions

Running this number honestly across the full production workload, including runaway sessions and failures, not just your best-behaved tasks, tells you whether you are running a viable operation or an interesting pilot that happens to generate Return on Investment (ROI) on a carefully selected subset.
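The gap between the honest number and the flattering one is easy to demonstrate. The session costs below are invented purely for illustration, and `cost_per_outcome` is just the formula above written as a function.

```python
def cost_per_outcome(compute_cost: float, infra_overhead: float,
                     successful_completions: int) -> float:
    """CPO = (total compute cost + infrastructure overhead) / successes."""
    if successful_completions <= 0:
        raise ValueError("CPO is undefined with no successful completions")
    return (compute_cost + infra_overhead) / successful_completions


# Illustrative sessions: (compute cost in dollars, succeeded?)
sessions = [(0.40, True), (0.55, True), (12.00, False), (0.45, True)]
infra_overhead = 1.60

successes = sum(1 for _, ok in sessions if ok)
# Honest: every session's cost counts, including the runaway failure.
honest = cost_per_outcome(sum(c for c, _ in sessions), infra_overhead, successes)
# Cherry-picked: only successful sessions, overhead ignored.
cherry_picked = cost_per_outcome(sum(c for c, ok in sessions if ok), 0.0, successes)
print(f"honest CPO: {honest:.2f}, cherry-picked CPO: {cherry_picked:.2f}")
```

With these made-up figures a single runaway session moves the CPO from about 0.47 to about 5.00, which is why failures belong in the numerator.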

Two architecture controls make CPO viable in practice.

Token Budgeting with Forced Escalation. Every agent session begins with a hard token allowance. If the agent reaches its budget without a validated output, the workflow escalates to a human queue with intermediate reasoning logged for review. This is not a system failure. It is the system working correctly: acknowledging the limits of autonomous reasoning and returning control to a human rather than burning resources on diminishing returns.
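A minimal sketch of that control follows. It assumes each reasoning iteration can be represented as a pair of tokens used and a validated output (or `None` if the iteration produced nothing usable); the function name and return shape are hypothetical.

```python
from typing import Iterable, Optional, Tuple


def run_with_budget(steps: Iterable[Tuple[int, Optional[str]]], budget: int) -> dict:
    """Consume reasoning iterations until one validates or the budget runs out."""
    spent = 0
    log = []
    for tokens, output in steps:
        if spent + tokens > budget:
            break  # hard stop: the next iteration would exceed the allowance
        spent += tokens
        log.append({"tokens": tokens, "validated": output is not None})
        if output is not None:
            return {"status": "completed", "output": output, "tokens": spent}
    # Budget exhausted (or iterations ran out) without a validated output.
    return {"status": "escalated_to_human", "tokens": spent, "log": log}
```

The escalation result carries the intermediate log, so the human picking up the case sees what the agent already tried.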

Model Routing on Task Complexity. Frontier models cost an order of magnitude more than smaller, purpose-trained models for tasks that do not require frontier capability. Classification, extraction, summarisation of well-structured documents, entity recognition, and routing decisions should run on smaller, faster, cheaper models, including on-premises deployments where data sensitivity demands it. Only genuine complex reasoning, such as legal document interpretation, multimodal analysis, and ambiguous entity resolution, should escalate to frontier capability. In production deployments using this approach, model costs have been reduced by 50–70% on appropriate task mixes with no measurable quality degradation. The exact figure depends on the proportion of complex versus routine tasks, but in financial services the economics tend to be favourable.
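Routing of this kind can start as a plain lookup. In the sketch below the task labels mirror the categories named above, while the tier names (`small-hosted`, `small-on-prem`, `frontier`) are hypothetical placeholders rather than real model identifiers.

```python
ROUTINE_TASKS = {"classification", "extraction", "summarisation",
                 "entity_recognition", "routing"}
COMPLEX_TASKS = {"legal_interpretation", "multimodal_analysis",
                 "ambiguous_entity_resolution"}


def route_model(task_type: str, sensitive_data: bool = False) -> str:
    """Pick a model tier from the task type; unknown types are rejected."""
    if task_type in ROUTINE_TASKS:
        return "small-on-prem" if sensitive_data else "small-hosted"
    if task_type in COMPLEX_TASKS:
        return "frontier"
    # Fail loudly so new task types get classified on purpose, not by default.
    raise ValueError(f"unclassified task type: {task_type}")
```

Rejecting unknown task types is a deliberate choice in this sketch: a silent default to the frontier tier is how routing savings erode over time.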

DORA is not a compliance problem. It is an architecture test.

The Functional Equivalence mandate in the Digital Operational Resilience Act (DORA) is an architectural requirement. You must demonstrate that your institution can continue to operate at functional parity if a critical third-party technology provider, including your AI model provider, becomes unavailable. If your agentic workflows depend on a single model vendor or a single cloud provider, and either experiences an outage, and you cannot demonstrate a tested failover path, you are non-compliant. Not procedurally. Architecturally. The resilience requirement extends across the full stack: model providers, cloud infrastructure, and the orchestration layer that connects them. An architecture that is model-agnostic but cloud-locked has solved half the problem.

By abstracting the interface between your orchestration layer and the underlying model, you decouple business logic from any specific provider. When this abstraction is designed in from the beginning, transitions between providers are measured in minutes. Retrofitting it is one of the most expensive architectural corrections a production agentic system can require.
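In code, the abstraction can be as small as one interface and one failover loop. The sketch below is illustrative only: `ModelProvider`, `ProviderUnavailable`, and `StubProvider` are hypothetical names, and a real adapter would wrap each vendor's own client behind the same `complete` method.

```python
from typing import Protocol, Sequence, Tuple


class ProviderUnavailable(Exception):
    """Raised by an adapter when its backing model cannot be reached."""


class ModelProvider(Protocol):
    name: str

    def complete(self, prompt: str) -> str: ...


class StubProvider:
    """Stand-in adapter used here in place of a real vendor client."""

    def __init__(self, name: str, up: bool = True) -> None:
        self.name = name
        self.up = up

    def complete(self, prompt: str) -> str:
        if not self.up:
            raise ProviderUnavailable("simulated outage")
        return f"[{self.name}] {prompt}"


def complete_with_failover(providers: Sequence[ModelProvider],
                           prompt: str) -> Tuple[str, str]:
    """Try providers in priority order; return (provider name, completion)."""
    errors = []
    for provider in providers:
        try:
            return provider.name, provider.complete(prompt)
        except ProviderUnavailable as exc:
            errors.append(f"{provider.name}: {exc}")
    raise ProviderUnavailable("all providers failed: " + "; ".join(errors))
```

Returning the provider name alongside the completion means the audit trail records which model actually answered, which matters when a failover changes behaviour.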

The EU AI Act adds a second dimension: explainability as a first-class engineering requirement. When an agent flags a transaction for SAR filing, makes a credit recommendation, or approves a KYC application, regulators do not want the output. They want the reasoning trace: what the agent considered, in what order, with what intermediate conclusions, and why those conclusions led to the final output. This is not a logging requirement you can bolt onto an existing system. Retrofit attempts consistently produce incomplete traces that fail forensic review, because the reasoning that matters often happens in intermediate steps a bolted-on logger never sees.

On timing: DORA entered full application on 17 January 2025 and enforcement is already active. EU AI Act obligations for high-risk AI systems, which include credit scoring, KYC, fraud detection, and any agent making consequential decisions about individuals, apply from 2 August 2026. If your current production systems cannot produce a complete, human-readable reasoning trace for any given decision, that deadline is not a future problem. It is a current architectural deficit with a fixed due date.

Why Beyond

Beyond is an agentic AI delivery partner. We build and operate agents across financial services and e-commerce environments: recommender agents that surface context-aware decisions at scale; explanatory agents that make AI outputs interpretable to compliance teams, customers, and regulators; procedure implementation agents that execute multi-step operational workflows in production systems; and engineering-enhancing agents that accelerate the development teams responsible for building and maintaining this infrastructure.

These are not proofs of concept. They are production systems running against real customer data, with real regulatory and commercial exposure. Beyond's practitioners bring more than fifteen years of experience at organisations including HSBC, Deutsche Bank, Lloyds Banking Group, and Santander. We are a Google Cloud Global Strategic Cyber Security Partner, with work spanning infrastructure modernisation, AI and data activation, and security.

The engagement model we propose is a stress test, not a capability assessment. Start with your highest-risk production workflow: a KYC pipeline, a multi-party approval chain, or an automated reporting workflow feeding regulatory submissions. We apply our Hybrid Guardian Layer, an independent LLM-based semantic reviewer combined with a hard-coded deterministic compliance engine, against that workflow in a parallel environment. The goal is to demonstrate concretely what it surfaces that current monitoring misses: the token cost breakdown, where the CPO number diverges from current estimates, and the reasoning traces your compliance team would need to respond to a regulator inquiry. Then you decide whether the architecture holds up.

Governance at scale

The governance architecture described above is the right foundation. But architecture answers what controls exist. At institutional scale, a harder question emerges: who runs this, how does it hold together as the agent footprint grows, and what happens when the framework meets the organisational reality of a large financial institution? Five problems surface consistently.

The governance velocity problem

At 100+ agents with fifteen engineering teams pushing updates simultaneously, a single red-teaming suite applied uniformly to every change becomes a bottleneck. Teams route around it. The resolution is a tiered governance model that applies control proportionally to risk. Layer 1 agents move through a lightweight automated gate. Layer 2 agents require a full red-teaming pass and explicit sign-off from a named risk owner. Layer 3 agents require both technical sign-off and a formal governance review including the risk and compliance functions. The arbitration function for cross-team disputes is a dedicated AI Governance role, staffed by practitioners who understand both the technical architecture and the regulatory obligations, with a clear escalation path to the Chief Risk Officer for disputes that cannot be resolved at the technical level.

The agent-to-agent trust problem

OBO Authentication works cleanly for human-initiated workflows. It encounters a structural gap when agents spawn sub-agents. The governing principle is strict: delegation cannot exceed grant. A sub-agent can hold at most the permissions of the agent that spawned it, which can hold at most the permissions of the initiating human. No delegation event at any depth can add scope. Every spawning event is recorded in the Agent Registry as a delegation transaction. Policy alone is not enough here: the delegation chain must be structurally incapable of privilege escalation, not merely prohibited from it. In a financial services context, a misconfigured delegation is not a permissions error. It is a potential regulatory breach.
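"Delegation cannot exceed grant" reduces to a subset check applied at every spawning event. The sketch below is a minimal illustration with hypothetical permission names; a real system would also write each delegation to the registry.

```python
from typing import FrozenSet


def delegate(parent_scope: FrozenSet[str], requested: FrozenSet[str]) -> FrozenSet[str]:
    """Grant a child scope only if it is a subset of the parent's scope."""
    extra = requested - parent_scope
    if extra:
        raise PermissionError(f"delegation exceeds grant: {sorted(extra)}")
    return requested


# Human -> agent -> sub-agent: scope can only stay the same or shrink.
human = frozenset({"read_customer", "score_risk", "write_case_note"})
agent = delegate(human, frozenset({"read_customer", "score_risk"}))
sub_agent = delegate(agent, frozenset({"read_customer"}))
```

Because each call checks against the immediate parent, the property holds at any depth without the sub-agent ever seeing the human's full permissions.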

The production model drift problem

Governance-as-Code catches behavioural changes at deployment. It cannot catch behavioural change that occurs without any change to the agent's code or configuration. Foundation model providers update their models, sometimes with announcement and sometimes without, and the same agent with identical configuration can produce materially different outputs six months into production. The mitigation is continuous behavioural monitoring: a regression suite against a defined set of canonical inputs on a weekly cadence, or triggered automatically by a vendor model version notification. Deviation beyond a defined tolerance triggers a hold state on the affected agent. Your AI governance function must maintain a model version registry, not just an agent registry, with a tested rollback path for each.
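The regression check itself can be very plain. The sketch below assumes outputs for canonical inputs can be compared for exact equality, which suits classification-style agents better than free-text ones, and the 5% tolerance is an arbitrary illustrative value.

```python
def drift_rate(baseline: dict, current: dict) -> float:
    """Fraction of canonical inputs whose output differs from the baseline."""
    if not baseline:
        raise ValueError("baseline must contain at least one canonical input")
    changed = sum(1 for key in baseline if current.get(key) != baseline[key])
    return changed / len(baseline)


def agent_state(baseline: dict, current: dict, tolerance: float = 0.05) -> str:
    """Place the agent on hold when drift exceeds the agreed tolerance."""
    return "hold" if drift_rate(baseline, current) > tolerance else "active"
```

A missing answer counts as a change here, so an agent that starts refusing or timing out on canonical inputs is caught by the same check.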

The succession and orphan agent problem

Named human ownership is essential and brittle in large organisations. People change roles, leave, get reorganised. When an owner becomes unavailable, agents under their ownership should enter a defined hold state, continuing in-flight workflows but unable to be updated or promoted until a new owner formally accepts accountability in writing. A monthly audit of ownership currency is a minimum requirement, and it should be automated at scale. Before any agent is promoted to production, the succession path must be documented in the Registry. An unowned agent operating a consequential financial workflow is not a theoretical risk.

The cross-domain governance federation problem

A Tier-1 financial institution is not a single governance domain. Retail banking, investment banking, treasury, and compliance operate under different regulatory regimes and risk appetites. A governance framework designed for a single domain fails when extended to the whole institution, not because the framework is wrong but because it was never designed for federation.

The resolution is a two-tier model. The institutional baseline defines the non-negotiable controls that apply everywhere unconditionally: OBO authentication, central Agent Registry, reasoning trace capture for any decision that may face regulatory scrutiny, and deterministic validation for any compliance-critical output. These are not subject to domain-level variation. Above the baseline, each domain maintains a governance layer calibrated to its specific obligations. Treasury may require additional controls on agents touching limit structures. Investment banking may apply more conservative KYC confidence thresholds. These are legitimate differences and governance federation must accommodate them, without creating divergence at the baseline level.

Where to begin

The gaps that matter most, in order of urgency:

- Deterministic validation for every compliance-critical decision output, immediately. OFAC checks, sanctions screening, and hard regulatory thresholds must not pass through a language model as their final validation gate. This week, not this quarter.

- Non-Human Identity audit. Inventory every AI agent in your environment, not just formally sanctioned ones, but every tool with an API key and access to an internal system. Assign each one an accountable human owner.

- Reasoning trace infrastructure for any workflow that may face regulatory scrutiny. Build it now, while the architecture is still malleable. Retrofitting is expensive and incomplete.

- Integration risk mapping for new agentic workflows. Before an agent touches a downstream system, understand that system's retry contract, idempotency characteristics, and behaviour under automated-velocity load. Treat this as a standard pre-production checklist item.

- Model-agnosticism, before your next AI contract renewal. Vendor lock-in to a single AI provider is a DORA compliance risk. Use the contract cycle to create the architectural space to move.

Statistical control over a digital workforce

The organisations that win in the agentic moment will not be the ones that deployed the most agents the fastest. They will be the ones that built the infrastructure to know, at any point in time, exactly what every agent is doing, why it is doing it, what it is permitted to do, and what will happen when it fails.

That infrastructure is deterministic orchestration layers, dual-track validation, governance-as-code, token budgets, and agent registries. It is the kind of engineering that does not make for a compelling conference keynote but that keeps production systems stable when the legacy core system times out, the agent retries, and the difference between a logged event and a workflow that ran twice is a single architectural decision you either made or didn't.

The question is not whether you need this architecture. The question is whether you build it before or after your next production incident.
